Have you ever wrapped a massively expensive corporate video shoot, only to sit in the editing bay and realize your primary camera angle completely missed the mark? Or perhaps you’ve poured thousands of dollars into rendering a 3D product demo, only to have the engineering team change the product specs, forcing you to start the agonizing rendering process all over again.

If you work in corporate communications, marketing, product development, or training, you are likely intimately familiar with the rigid, unforgiving, and incredibly costly nature of traditional video and 3D production. Once the pixels are baked, you are trapped in a two-dimensional box.

Right now, the internet is obsessing over AI-generated video tools. But while generative AI is busy creating slightly hallucinogenic 2D clips, a quieter and arguably more profound shift is taking place in spatial computing and volumetric capture. It is called 4D Gaussian Splatting, and it is pushing the boundaries of what is possible in digital media, transforming our flat screens into explorable, photorealistic environments.

Why Volumetric Video Matters to Your Daily Corporate Workflow

You might be thinking, "I work in B2B software sales or corporate HR. Why should I care about high-end visual effects technology used in Superman movies?"

The answer is simple: spatial computing is the next major computing paradigm. Just as the transition from desktop to mobile completely rewired how we conduct business, the transition from flat screens to immersive environments (driven by devices like the Apple Vision Pro and Meta Quest) will rewrite the rules of corporate engagement.

Imagine a world where:

- Corporate Training: Instead of watching a flat video on safety protocols, manufacturing employees can step inside a 1-to-1 digital twin of their actual factory floor, walking around a dynamically recorded supervisor to see exactly how a machine operates from any angle.

- B2B Product Demos: Instead of sending a PDF or a pre-rendered video to a prospect, you send an interactive, photorealistic 3D scene of your heavy machinery in action. The client can physically walk around the moving equipment in their own living room using augmented reality.

- Executive Communications: Your CEO records a quarterly update once, but that address can be deployed as a 2D video on the intranet, a holographic projection in regional offices, and a fully explorable VR experience for remote workers.

We are moving away from traditional polygon-based modeling and restrictive video formats toward living photography. Let’s break down exactly how this technology works, how it evolved, and the practical ways your organization can leverage it to stay ahead of the curve.

The Evolution of Spatial Capture: From Pointillism to Neural Rendering

To grasp the magnitude of 4D Gaussian Splatting, we first need to understand how we got here. The journey is a masterclass in solving massive computational bottlenecks.

The Pointillism Analogy

Think back to art history class and the concept of pointillism—paintings made of thousands of tiny, distinct dots of color. From a distance, the dots blend together to form a highly realistic image. But when you walk right up to the canvas, the illusion shatters into a chaotic cluster of individual paint drops.

3D Gaussian Splatting is essentially the digital, three-dimensional version of pointillism. Instead of a flat canvas, imagine millions of tiny 3D blobs floating in digital space. These blobs are called "Gaussians." Each individual Gaussian stores a small set of explicit parameters:

- Position: Its exact X, Y, and Z coordinates in space.

- Shape: How it stretches or compresses.

- Color: The specific RGB values it emits.

- Opacity: How transparent or solid it is.

When you stack millions of these mathematically defined ellipsoids together, you generate a photorealistic 3D scene that a user can freely navigate.
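To make the list above concrete, here is a minimal Python sketch of what one Gaussian might look like as a data record. The class name, field names, and the use of a quaternion for orientation are illustrative assumptions rather than the layout of any particular tool, and production systems typically store richer, view-dependent color than plain RGB.

```python
from dataclasses import dataclass

BYTES_PER_FLOAT32 = 4


@dataclass
class Gaussian:
    position: tuple   # (x, y, z) center of the blob
    scale: tuple      # (sx, sy, sz) stretch along each local axis
    rotation: tuple   # (w, x, y, z) unit quaternion; an assumption, the text only says "shape"
    color: tuple      # (r, g, b), each in [0, 1]
    opacity: float    # 0.0 = fully transparent, 1.0 = fully solid

    def float_count(self) -> int:
        """Number of floating-point values this Gaussian stores."""
        return (len(self.position) + len(self.scale)
                + len(self.rotation) + len(self.color) + 1)


def scene_size_bytes(num_gaussians: int, floats_per_gaussian: int = 14) -> int:
    """Uncompressed size if every value is kept as a 32-bit float."""
    return num_gaussians * floats_per_gaussian * BYTES_PER_FLOAT32


blob = Gaussian(
    position=(0.0, 1.2, -3.5),
    scale=(0.02, 0.05, 0.01),
    rotation=(1.0, 0.0, 0.0, 0.0),
    color=(0.8, 0.4, 0.1),
    opacity=0.9,
)
print(blob.float_count())            # 14
print(scene_size_bytes(1_000_000))   # 56000000 bytes, about 56 MB
```

Under these assumptions, a static scene of one million Gaussians weighs in at roughly 56 MB before any compression, which is why the scene counts in the millions stay manageable for a single frozen moment.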

The Problem with Neural Radiance Fields (NeRFs)

Before Gaussian Splatting became the industry darling in 2023, the cutting edge of neural rendering was the NeRF (Neural Radiance Field). NeRFs were revolutionary because they could take a handful of standard 2D photographs and use a multi-layer perceptron (a type of neural network) to predict and build a 3D scene.

However, NeRFs had a fatal flaw for enterprise applications: they were painstakingly slow. To render a view, the computer had to query the neural network at many sample points along the camera ray for every single pixel. This volume rendering technique meant it could take several seconds just to generate a single frame. In a corporate environment where you need real-time interaction (typically 60 to 90 frames per second), waiting 10 seconds for the camera to pan across a digital room is unacceptable.
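A quick back-of-the-envelope calculation shows why this approach struggles in real time. The sketch below simply counts network queries per frame; the figure of 128 samples along each ray is an assumed, illustrative value, not a number from any specific NeRF implementation.

```python
def nerf_queries_per_frame(width: int, height: int, samples_per_ray: int) -> int:
    """One camera ray per pixel, one network query per sample on that ray."""
    return width * height * samples_per_ray


# Full HD frame with an assumed 128 samples along each ray.
queries = nerf_queries_per_frame(1920, 1080, samples_per_ray=128)
print(f"{queries:,} network queries for a single frame")  # 265,420,800
```

Hundreds of millions of neural network evaluations for one image, repeated 60 to 90 times per second, is the bottleneck the next breakthrough set out to remove.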

The 3D Gaussian Breakthrough

In 2023, researchers at Inria made a pivotal shift. They asked: What if we skip the heavy neural network entirely during the rendering phase?

Instead of asking a machine learning model to guess what a pixel should look like in real time, they explicitly placed millions of these Gaussian splats into a 3D space and rasterized them directly. Modern Graphics Processing Units (GPUs) are incredibly efficient at rasterization.

Pro Tip for IT Leaders: Rasterization is the process of taking 3D models and converting them into 2D images on a screen. Because GPUs have spent the last three decades optimizing this exact process for video games, shifting from volume rendering (NeRFs) to direct rasterization (Gaussian Splats) unleashed massive performance gains.

The result? The rendering speed skyrocketed from a sluggish fraction of a frame per second to over 100 frames per second at full HD resolution on a standard commercial GPU. Real-time, photorealistic 3D environments were suddenly viable.
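For the technically curious, the core of splat rasterization can be sketched as sorting the splats that cover a pixel by depth and blending them front to back. The Python below is a simplified illustration for a single pixel: the function name and tuple layout are hypothetical, and it assumes each splat's contribution (alpha) at that pixel has already been computed, which a real renderer derives from the projected shape of the Gaussian on the GPU.

```python
def composite_pixel(splats):
    """Blend splats covering one pixel, nearest first.

    splats: list of (depth, (r, g, b), alpha) tuples, alpha in [0, 1].
    """
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light still passing through
    for _depth, rgb, alpha in sorted(splats, key=lambda s: s[0]):
        weight = transmittance * alpha
        for i in range(3):
            color[i] += weight * rgb[i]
        transmittance *= 1.0 - alpha
        if transmittance < 1e-4:  # pixel is effectively opaque, stop early
            break
    return tuple(color)


pixel = composite_pixel([
    (2.0, (0.0, 0.0, 1.0), 1.0),  # solid blue splat, farther away
    (1.0, (1.0, 0.0, 0.0), 0.5),  # half-transparent red splat, closer
])
print(pixel)  # (0.5, 0.0, 0.5)
```

There is no neural network anywhere in this loop, only sorting and arithmetic, which is exactly the kind of work GPUs are built to do in parallel across millions of pixels.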

But there was still one massive limitation. The scenes were entirely frozen. They were brilliant, high-fidelity still-lifes. To capture the real world—a world brimming with movement, speaking humans, flowing liquids, and dynamic lighting—we needed a fourth dimension.

Enter the Fourth Dimension: Mastering Time

How do you add the dimension of time (the "4D") to a scene composed of millions of floating mathematical blobs?

The Brute Force Failure

The immediate instinct is to treat it like a traditional flipbook or digital video. In standard video, you play 24 to 60 individual pictures per second to create the illusion of motion.

If you attempt this with Gaussian Splatting, you have to store a completely new, full 3D scene of millions of Gaussians for every single frame. This brute-force method results in an apocalyptic explosion of data. A short recording quickly escalates into gigabytes and terabytes of raw geometry, crashing rendering software and making network distribution utterly impossible.
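The scale of the problem is easy to estimate. The sketch below multiplies out the storage for the flipbook approach; the scene size, frame rate, and the figure of 56 bytes per Gaussian (14 values stored as 32-bit floats) are all illustrative assumptions, not measurements from a real capture.

```python
def brute_force_bytes(num_gaussians: int, bytes_per_gaussian: int,
                      fps: int, seconds: int) -> int:
    """Storage if a full, independent scene is saved for every frame."""
    return num_gaussians * bytes_per_gaussian * fps * seconds


# Assumed: 2 million Gaussians, 56 bytes each, 30 fps, a one-minute clip.
total = brute_force_bytes(2_000_000, 56, fps=30, seconds=60)
print(f"{total / 1e9:.1f} GB for one minute")  # 201.6 GB for one minute
```

Even with these modest assumptions, a single minute of footage lands in the hundreds of gigabytes before anyone has pressed "share."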

Smart Deformation: The Secret Sauce

To solve this, researchers looked to the principles of video compression. Traditional video codecs don't save every pixel of every frame; they only save what changes from one frame to the next (inter-frame compression).

In 4D Gaussian Splatting, the system creates one base scene, known as the canonical frame. Then, instead of loading millions of new dots for the next frame, a very small, highly optimized neural network is trained to learn the deformation field.

This network calculates instructions: How does this specific blob shift to the left? How does it rotate? Does it scale up or down?

By storing just one base set of Gaussians plus the mathematical instructions for how they move, the storage requirements plummet while maintaining pristine visual quality. You suddenly unlock real-time, dynamic 3D scenes. You can capture a human speaking, hair blowing in the wind, or coffee pouring into a mug, all fully explorable in 3D at 80+ frames per second.
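The idea of one canonical scene plus a small deformation network can be sketched in a few lines of Python. Everything here is a hedged illustration: the class and function names are hypothetical, the network weights are random rather than trained, and only position offsets are shown, whereas the text also describes rotation and scale changes.

```python
import math
import random


class TinyDeformationField:
    """Untrained stand-in for the small network: (x, y, z, t) -> (dx, dy, dz)."""

    def __init__(self, hidden: int = 8, seed: int = 0):
        rng = random.Random(seed)
        self.w1 = [[rng.uniform(-0.5, 0.5) for _ in range(4)] for _ in range(hidden)]
        self.w2 = [[rng.uniform(-0.5, 0.5) for _ in range(hidden)] for _ in range(3)]

    def offset(self, position, t):
        inputs = (*position, t)
        hidden = [math.tanh(sum(w * v for w, v in zip(row, inputs)))
                  for row in self.w1]
        return tuple(sum(w * h for w, h in zip(row, hidden)) for row in self.w2)


def deform(canonical_positions, field, t):
    """Move every canonical Gaussian to where it should be at time t."""
    moved = []
    for pos in canonical_positions:
        dx, dy, dz = field.offset(pos, t)
        moved.append((pos[0] + dx, pos[1] + dy, pos[2] + dz))
    return moved


canonical = [(0.0, 0.0, 0.0), (1.0, 0.5, -2.0), (-0.3, 1.2, 0.8)]
field = TinyDeformationField()

frame_start = deform(canonical, field, t=0.0)
frame_later = deform(canonical, field, t=0.5)
print(frame_start[1])
print(frame_later[1])
```

The point of the design is what gets stored: the canonical list is saved once, and every other moment in time is produced by asking the same small network a different question, rather than by saving another full copy of the scene.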

Corporate Scenario: The Immersive HR Onboarding

Let’s ground this in a practical corporate application. Your manufacturing firm needs to train new hires on operating a highly sensitive piece of machinery.

- The Capture: You set up a multi-camera rig around an expert technician. The technician performs the complex task once.

- The Processing: The video feeds are processed into a 4D Gaussian Splat. The base geometry is mapped, and the deformation network learns the technician's exact movements.

- The Deployment: The final 4D asset is uploaded to your company’s learning management system.

- The End-User Experience: A new hire puts on a VR headset. They are standing in the virtual room with the technician. Because this is true volumetric video, the hire can physically crouch down to look under the technician's arm, inspect the exact button being pressed from a side angle, and walk a full 360 degrees around the operation while it plays out.

The cognitive retention of this type of spatial learning dramatically outpaces reading a manual or watching a flat safety video.

Overcoming the Storage Bottleneck

While smart deformation drastically reduces file sizes compared to the brute-force method, 4D capture is still incredibly data-intensive.

When A$AP Rocky’s production team shot the music video for "Helicopter" using volumetric capture, the 56-camera rig generated a staggering 10 terabytes of raw data for just a few minutes of footage. Even the most well-funded IT departments would balk at housing that kind of file architecture for routine corporate marketing assets.

The Great Compression Race

To make 4D Splatting accessible outside of massive Hollywood studios, a highly competitive compression race is currently underway:

- Niantic's SPZ Format: Niantic (the creators of Pokémon GO) recently open-sourced a format called SPZ. It reduces the file size of Gaussian splats by roughly 90% with almost no visible loss in quality. It functions much like a JPEG compressor does for flat images.

- Industry Standardization (glTF): The Khronos Group, the consortium behind vital graphics standards like OpenGL, Vulkan, and WebGL, officially adopted the SPZ format into the glTF standard.

- Next-Gen Algorithms: Academic papers and proprietary tools like MEGA and HAC++ are currently pushing towards 100x compression rates, factoring out unnecessary data and optimizing how the geometry is stored.
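To give a feel for how this kind of compression can work in principle, here is a generic Python sketch that stores coordinates as 16-bit integers instead of 32-bit floats and then applies a lossless pass on top. This is not the actual SPZ, MEGA, or HAC++ algorithm; the 16-bit precision, value range, and random test data are all assumptions chosen for illustration.

```python
import random
import struct
import zlib

LEVELS = (1 << 16) - 1  # 16-bit codes: 0..65535


def quantize(values, lo, hi):
    """Map floats in [lo, hi] to 16-bit integer codes (lossy)."""
    return [round((v - lo) / (hi - lo) * LEVELS) for v in values]


def dequantize(codes, lo, hi):
    """Recover approximate floats from the integer codes."""
    return [lo + c / LEVELS * (hi - lo) for c in codes]


rng = random.Random(0)
coords = [rng.uniform(-10.0, 10.0) for _ in range(30_000)]  # made-up positions

raw = struct.pack(f"<{len(coords)}f", *coords)    # 32 bits per value
codes = quantize(coords, -10.0, 10.0)
packed = struct.pack(f"<{len(codes)}H", *codes)   # 16 bits per value
compressed = zlib.compress(packed, 9)             # lossless pass on top

restored = dequantize(codes, -10.0, 10.0)
max_error = max(abs(a - b) for a, b in zip(coords, restored))

print(len(raw), len(packed), len(compressed))
print(f"worst-case position error: {max_error:.6f}")
```

Quantization alone halves the payload here while keeping the positional error to a tiny fraction of the scene size. Random test data like this gives the lossless pass little to work with; real captures have far more structure, and dedicated formats exploit that in ways this sketch does not attempt.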

Pro Tip for Developers: The integration into glTF is a watershed moment for corporate IT. It means 3D Gaussian Splats are no longer isolated academic experiments. They can be natively embedded into standard web browsers, e-commerce platforms, and existing enterprise asset management systems just as easily as a standard PNG or MP4.

The Proof is in the Production: Real-World Applications

This isn't theoretical technology ten years out; it is being actively deployed in massive, high-stakes environments right now. Exploring these use cases provides a blueprint for how enterprises can adopt the technology.

1. Hollywood Level Volumetric Performance (Superman)

For the upcoming Superman film directed by James Gunn, the visual effects studio Framestore collaborated with Infinite Realities to capture holographic messages from Superman's Kryptonian parents.

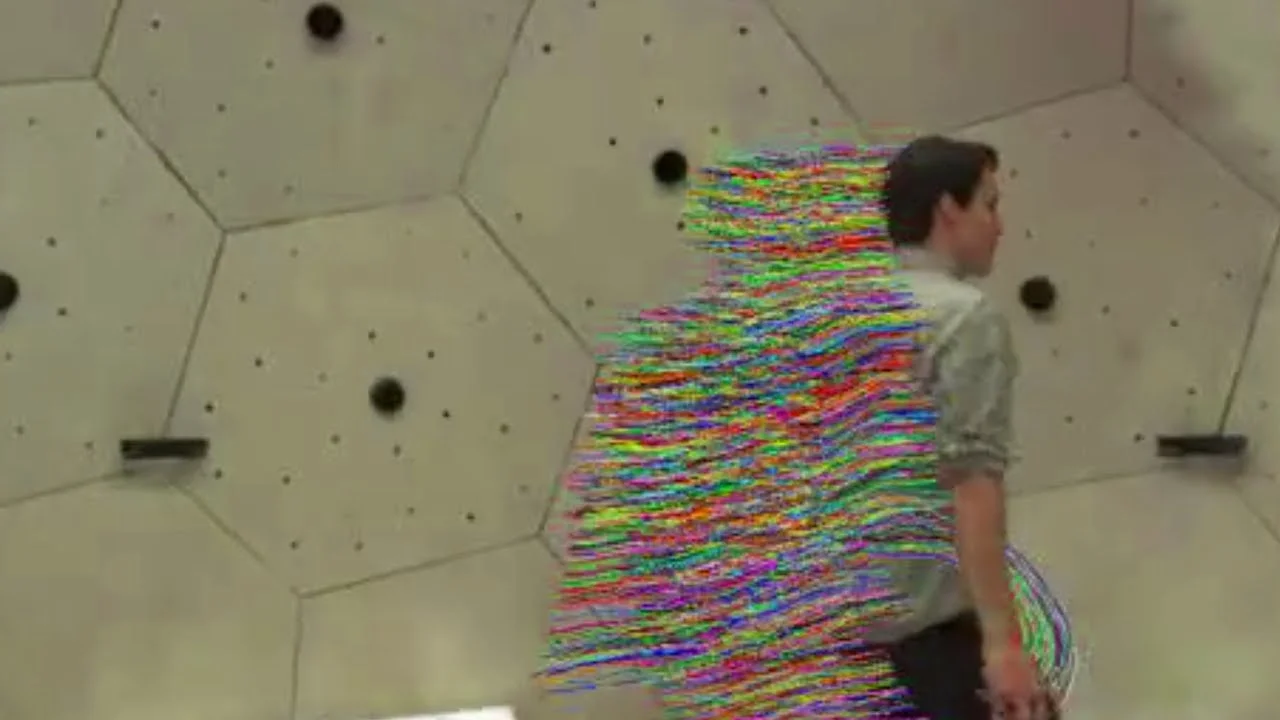

Instead of generating artificial digital doubles using traditional CGI or generative AI, they built a massive stage—the Deus—featuring 192 synchronized cameras firing at 24 frames per second. The actors performed their scenes normally. The resulting data was run through a machine learning pipeline, producing living photography.

Because the final asset was a true 4D Splat, the director could infinitely reframe the camera angles in post-production. Furthermore, visual effects artists could manipulate the 3D data natively, introducing glitches, offsets, and spatial jittering to make the holograms feel tactile and embedded in the environment.

The Corporate Translation: For high-stakes investor relations or product launch keynotes, executives can be captured volumetrically. Marketing teams can then extract an infinite number of perfectly framed 2D videos for social media, while providing the full 3D experience for VIP clients using spatial computing headsets.

2. Virtual Location Scouting (Jurassic World Rebirth)

In pre-production for Jurassic World Rebirth, the team used 360-degree cameras to capture remote jungle environments in Thailand. They converted this flat 360 footage into highly detailed 3D Gaussian Splats and loaded them directly into Unreal Engine.

The director was then able to put on a VR headset in a studio in London and physically walk through the digital jungle, framing shots, checking sightlines, and planning camera movements with a virtual camera rig.

The Corporate Translation: Consider the massive logistical costs of planning a corporate retreat, designing a new flagship retail store, or orchestrating a large-scale live event. Instead of flying a dozen stakeholders to a venue, a single scout can capture the space. The entire planning committee can then jump into a shared multiplayer VR environment, walking through the photorealistic venue together to plan seating, sightlines, and branding placements.

3. Next-Generation Live Broadcasting (Arcturus)

Arcturus is deploying this technology in live sports. By surrounding a baseball diamond or a basketball court with a ring of cameras, they instantly fuse the feeds into a seamless volumetric video. Broadcasters can generate free-viewpoint replays on the fly, flying the virtual camera directly over the batter's shoulder or underneath the basket—angles where physical cameras do not exist.

The Corporate Translation: Imagine a high-profile corporate product demonstration, perhaps the unveiling of a new electric vehicle chassis. Capturing the event volumetrically allows your audience to view the broadcast interactively. A client watching remotely isn't restricted to the director's cut; they can swipe on their tablet to orbit the vehicle live as the presenter is speaking.

The Future: Integrating the Holodeck into B2B

We are rapidly approaching an era where the distinction between capturing reality and simulating reality completely dissolves. We are effectively building the foundation for the "Holodeck."

The final frontier for this technology is environmental integration, specifically path-traced relighting. Right now, a Gaussian Splat perfectly captures the lighting of the room at the exact moment it was recorded. If you take a 4D capture of a person recorded in a bright, sunny studio and drop them into a dark, moody virtual environment, they will still look brightly lit. The lighting is baked in.

However, rendering engines are evolving to solve this. OTOY's OctaneRender has announced that its 2026 release will feature the industry's first commercial path tracer with native Gaussian splat support.

This means you will be able to bring a 4D volumetric capture of your CEO into a 3D rendering engine, and the software will dynamically relight them. It will generate accurate shadows, allow for cinematic depth of field, and apply global illumination. You can place a living, breathing capture of a real human into any virtual environment, and the lighting will react as if they are physically standing there.

Structuring Your Organization for the Spatial Web

The shift from 2D video to 4D spatial environments requires a fundamental rethinking of how your organization approaches digital assets. To prepare, corporate leaders should consider the following steps:

- Audit Your Visual Asset Pipeline: Understand where your organization spends the most time and money on video reshoots or 3D rendering. These bottlenecks are prime candidates for volumetric workflows.

- Investigate Cloud Infrastructure: Handling massive point-cloud and splat data requires robust cloud architecture. Begin discussions with your IT department regarding data storage, transfer protocols, and edge computing capabilities.

- Experiment with Web-Based Spatial Tools: You do not need a 192-camera rig to start. Tools like Luma AI and Polycam allow you to capture basic 3D Gaussian Splats using nothing but a modern smartphone. Encourage your creative teams to begin testing these workflows today.

- Embrace Standardization: As you build out a library of digital twins and 3D assets, ensure your vendors are delivering files in standardized, highly compressed formats like glTF and SPZ to ensure cross-platform compatibility.

We are witnessing the death of flat pixels and the birth of living, explorable digital worlds. The organizations that figure out how to leverage 4D Gaussian Splatting for communication, training, and sales will not just capture attention—they will allow their audience to step directly inside their brand.

What process in your daily corporate workflow would benefit the most from being able to physically walk around inside a frozen moment of time? Drop your use cases and thoughts in the comments below!